- This article has been translated from the original Japanese article using DeepL. Sorry if it's hard to read.

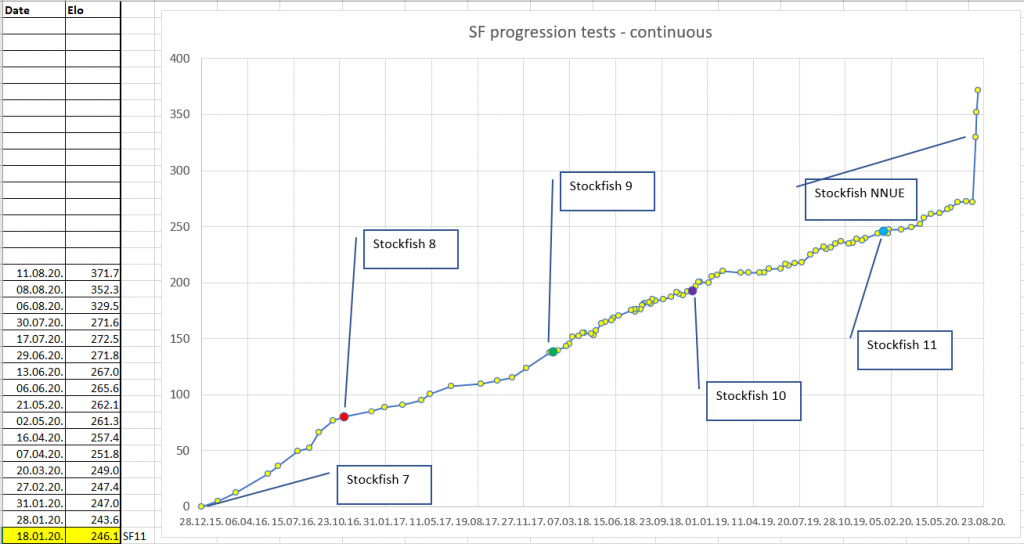

The NNUE evaluation function has been ported to Stockfish and has had a noticeable effect.

Last year, I said, "The NNUE evaluation function is a technology that would probably make Stockfish about 100-200 rating points stronger if it were imported back into Stockfish." What I said in the part marked with a dotted line has come true, almost to the number.

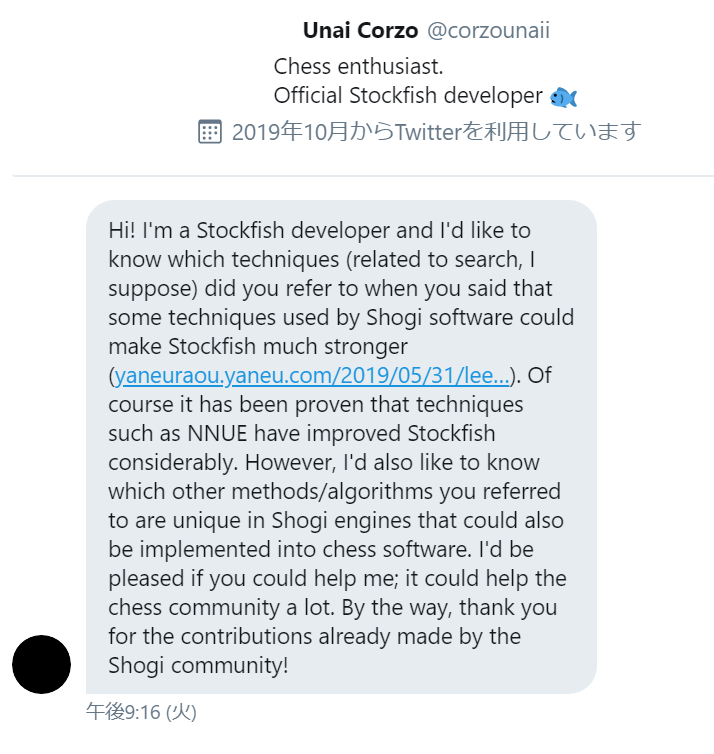

As a result, I've been getting a lot of inquiries like, "What you said has been proven. Tell me what other shogi software techniques you would use!"

So I'd like to write about three shogi AI techniques that I think would be effective if brought to Stockfish.

1. Stochastic optimization

Mr. Tanuki- (@nodchip) used Hyperopt to automatically optimize search parameters at WCSC27.

- WCSC27 tanuki-team PR document : http://www2.computer-shogi.org/wcsc27/appeal/tanuki-/appeal.pdf

Today, the tool to use would be Optuna, developed by PFN (Preferred Networks).

Optuna : https://github.com/optuna/optuna

Rather than tuning the search parameters one at a time, we want to tune them all jointly with stochastic optimization, including whether each pruning technique is switched on or off, the network size of the evaluation function, and so on.
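As a rough illustration of tuning everything jointly, here is a minimal, dependency-free sketch using plain random search, the simplest form of stochastic optimization. Everything in it is hypothetical: the parameter names, their ranges, and `play_matches`, which stands in for actually running engine matches and returns a toy score. Optuna's samplers (such as TPE) are smarter than random sampling, but the structure is the same: sample all parameters at once, score the configuration, and keep the best.

```python
import random
from typing import Any, Dict, Tuple

# Hypothetical search space: names and ranges are illustrative, not Stockfish's.
SEARCH_SPACE: Dict[str, list] = {
    "null_move_pruning": [False, True],           # pruning technique on/off
    "futility_pruning": [False, True],            # pruning technique on/off
    "futility_margin": list(range(50, 301, 10)),  # integer margin, 50..300
    "hidden_layer_size": [16, 32, 64],            # evaluation network size
}


def sample_params(rng: random.Random) -> Dict[str, Any]:
    """Draw one configuration, sampling every parameter together."""
    return {name: rng.choice(values) for name, values in SEARCH_SPACE.items()}


def play_matches(params: Dict[str, Any]) -> float:
    """Hypothetical stand-in for engine matches; higher is better.

    A real objective would play games with `params` against a baseline and
    return, e.g., an Elo estimate. This toy function peaks at 18.0.
    """
    score = 10.0 if params["null_move_pruning"] else 0.0
    score += 5.0 if params["futility_pruning"] else 0.0
    score -= abs(params["futility_margin"] - 150) / 10.0
    score += {16: 0.0, 32: 3.0, 64: 1.0}[params["hidden_layer_size"]]
    return score


def random_search(n_trials: int, seed: int = 0) -> Tuple[Dict[str, Any], float]:
    """Evaluate n_trials random configurations and return the best one."""
    rng = random.Random(seed)
    best_params: Dict[str, Any] = {}
    best_score = float("-inf")
    for _ in range(n_trials):
        params = sample_params(rng)
        score = play_matches(params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


if __name__ == "__main__":
    params, score = random_search(n_trials=200)
    print(params, score)
```

With Optuna, `sample_params` would become calls such as `trial.suggest_categorical("null_move_pruning", [False, True])` and `trial.suggest_int("futility_margin", 50, 300, step=10)` inside an objective function, run via `optuna.create_study(direction="maximize")` and `study.optimize(objective, n_trials=200)`. Real match results are noisy, so each trial would need enough games to give a meaningful score.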

2. Switching the evaluation function

Improving the evaluation function by reinforcement learning requires increasing the search depth used to generate the teacher data, but this quickly hits a limit: the computational resources required grow exponentially while the rating improves only slightly.

Compared with deep-learning-based evaluation functions such as AlphaZero's, the NNUE evaluation function has limited expressive power, so it cannot return a reasonable evaluation in every position.

It is therefore important to prepare separate evaluation functions for different situations: for example, different ones for the opening, middlegame, and endgame, different ones for each battle type (opening formation), or different ones depending on the evaluation value (how far one side is ahead).
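A minimal sketch of switching evaluation functions by game phase, assuming a hypothetical position summary (a dict with `non_pawn_material`, `material_balance`, and `mobility_balance`) and placeholder evaluators that stand in for separately trained networks. The thresholds are illustrative only. The same dispatch could instead key on the battle type or on the magnitude of a first-pass evaluation.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical position summary; a real engine would pass its own board type.
Position = Dict[str, int]
Evaluator = Callable[[Position], int]


def opening_eval(pos: Position) -> int:
    # Placeholder for a network trained only on opening positions.
    return pos["material_balance"] + 2 * pos["mobility_balance"]


def middlegame_eval(pos: Position) -> int:
    # Placeholder for a network trained only on middlegame positions.
    return pos["material_balance"] + pos["mobility_balance"]


def endgame_eval(pos: Position) -> int:
    # Placeholder for a network trained only on endgame positions.
    return pos["material_balance"]


# (minimum non-pawn material, evaluator), checked from top to bottom.
PHASE_TABLE: List[Tuple[int, Evaluator]] = [
    (5000, opening_eval),
    (2000, middlegame_eval),
    (0, endgame_eval),
]


def select_evaluator(pos: Position) -> Evaluator:
    """Pick the evaluator for the position's phase (more material = earlier)."""
    for threshold, evaluator in PHASE_TABLE:
        if pos["non_pawn_material"] >= threshold:
            return evaluator
    return PHASE_TABLE[-1][1]  # fall back to the last (endgame) evaluator


def evaluate(pos: Position) -> int:
    return select_evaluator(pos)(pos)
```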

3. Automatic generation of opening books

YaneuraOu (the winning software at WCSC29) uses a technique called the tera-shock opening book, in which the book itself is generated automatically.

How to generate the tera-shock opening book : http://yaneuraou.yaneu.com/2019/04/19/%e3%83%86%e3%83%a9%e3%82%b7%e3%83%a7%e3%83%83%e3%82%af%e5%ae%9a%e8%b7%a1%e3%81%ae%e7%94%9f%e6%88%90%e6%89%8b%e6%b3%95/

YaneuraOu WCSC30 PR document : http://yaneuraou.yaneu.com/2020/03/28/%e3%82%84%e3%81%ad%e3%81%86%e3%82%89%e7%8e%8b-wcsc30-pr%e6%96%87%e6%9b%b8/

Roughly speaking, it works like this:

1) Search each position in a given set of game records for a long time and export the results to an opening-book database.

2) Regenerate the opening-book tree by performing a minimax search from the initial position, treating each position in the database as a leaf node of the game tree.
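Step 2 can be sketched as a negamax search (a compact form of minimax) over the book database. This is a hypothetical illustration, not YaneuraOu's actual implementation: `db` maps a position key to the score from the long search in step 1 (from the side to move's point of view), `graph` lists the moves between positions, positions with no searched child are treated as leaves, and the reachable graph is assumed to be acyclic (a real generator would also need to handle repetitions).

```python
from typing import Dict, List, Optional, Tuple

# Hypothetical book database: position key -> score in centipawns from the
# point of view of the side to move, obtained by a long search (step 1).
BookDB = Dict[str, int]
# Hypothetical move graph: position key -> list of (move, child position key).
MoveGraph = Dict[str, List[Tuple[str, str]]]


def build_book(db: BookDB, graph: MoveGraph, root: str) -> Dict[str, Tuple[int, Optional[str]]]:
    """Back up DB scores from the leaves to the root with negamax.

    Returns {position: (value, best_move)} for every visited position.
    A position with no child in the DB is a leaf and keeps its stored score;
    best_move is None for leaves. Assumes root is in db and the graph is acyclic.
    """
    book: Dict[str, Tuple[int, Optional[str]]] = {}

    def visit(pos: str) -> int:
        if pos in book:  # transposition already evaluated
            return book[pos][0]
        best_value: Optional[int] = None
        best_move: Optional[str] = None
        for move, child in graph.get(pos, []):
            if child not in db:
                continue  # only searched positions take part in the tree
            value = -visit(child)  # the child's score is from the opponent's view
            if best_value is None or value > best_value:
                best_value, best_move = value, move
        if best_value is None:
            best_value = db[pos]  # leaf node: use the long-search score
        book[pos] = (best_value, best_move)
        return best_value

    visit(root)
    return book


if __name__ == "__main__":
    db = {"start": 10, "after_e4": -20, "after_d4": 5, "after_e4_e5": 30}
    graph = {
        "start": [("e4", "after_e4"), ("d4", "after_d4")],
        "after_e4": [("e5", "after_e4_e5")],
    }
    print(build_book(db, graph, "start")["start"])  # (30, 'e4')
```

With this toy data, 1.e4 is chosen at the root because the searched reply 1...e5 leads to a position scored +30 for White. The stored scores of the inner positions (`start`, `after_e4`) are replaced by the backed-up values, which is the point of regenerating the tree.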

Conclusion

For now, I've picked three techniques that seem likely to work right away. Whether they actually work on the chess side, I don't know 😅.

https://www.chessprogramming.org/Stockfish_NNUE#cite_note-27

It looks like this article is being treated as if Mr. Nasu wrote it??

I wonder whether that's really him...?